The Threat of AI Deepfakes in Politics

The rise of overseas content farms that use artificial intelligence (AI) to create misleading political social media posts has raised significant concerns about the integrity of information online. As elections approach and the stakes of public perception grow, understanding the mechanisms behind these deepfakes becomes increasingly important. Such fabricated media can sway opinions, disrupt democratic processes, and contribute to a pervasive atmosphere of mistrust.

Deepfakes are a form of synthetic media created with advanced AI techniques that manipulate video, images, or audio to misrepresent reality. They range from benign parodies to malicious disinformation. Content farms, especially those operating from overseas, use these capabilities to fabricate realistic but false portrayals of politicians. For instance, a recent BBC Wales investigation uncovered numerous deepfake videos depicting UK politicians in compromising situations, such as Welsh politicians endorsing opponents or engaging in scandalous behavior. These fabricated posts often masquerade as credible news and can quickly go viral, drawing in viewers who may unknowingly share or endorse the misleading content. As Professor Martin Innes of Cardiff University has highlighted, such pages are often driven by profit, exploiting social media algorithms to maximize engagement.

The issue is illustrated by the multiple fake posts created about prominent figures such as Nigel Farage and Rishi Sunak, which place them in various outlandish scenarios. Some posts showed Farage behaving in ludicrous ways, such as claiming to adopt pets or making far-fetched political statements, creating narratives that can mislead even vigilant followers. Readers should think critically about what they consume online and about how quickly misinformation can spread in an interconnected world. The increase in deepfake and misleading content calls for better tools and strategies for identifying false claims, and bodies such as the Electoral Commission are developing technologies to tackle these issues and safeguard democratic processes.

In conclusion, as AI technologies evolve, so does the potential for misinformation to erode societal trust and democratic integrity. The proliferation of deepfakes makes it harder for voters to distinguish credible news from false narratives, and it demands closer scrutiny and firmer action from technology platforms and regulatory bodies. For those who want to explore the issue further, resources on digital literacy and ethical guidelines for AI can support better understanding and critical assessment of online information.

Read These Next

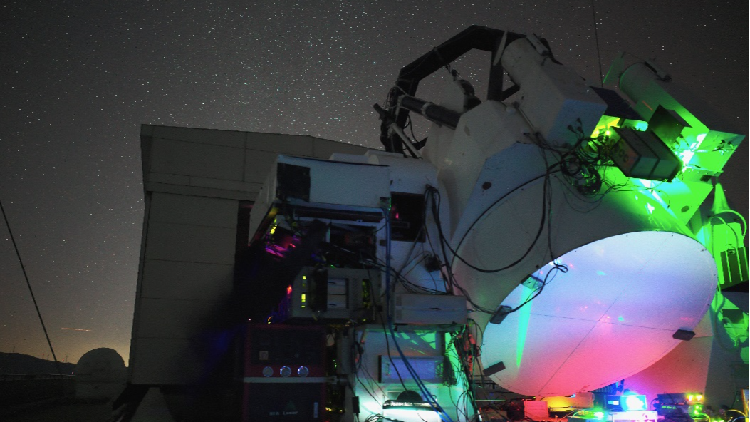

China Breaks Ground in Satellite-Ground Laser Communication

Chinese researchers achieved 1 Gbps laser communication between a satellite and ground, exceeding 40,000 km, for over three hours.

Grammarly's AI Sparks Ethical Debate on Consent

Exploring the ethical implications of Grammarly’s AI feature that mimicked well-known writers, highlighting consent and the quality of AI outputs in creative fields.

Inside China's Weight Management Struggles in Major Hospitals

China's Healthy China 2030 Initiative prioritizes weight management, with hospitals offering services to tackle obesity and improve health.