Grammarly's AI Sparks Ethical Debate on Consent

The intersection of artificial intelligence and the creative professions has provoked heated discussion recently, particularly over Grammarly’s controversial AI feature that mimicked the writing personas of well-known authors. The feature, known as Expert Review, was taken down after strong backlash over its use of writers’ names, including famous ones like Stephen King and Carl Sagan. The incident raises questions about consent and the ethics of using AI in creative fields.

Expert Review was designed to give users writing feedback inspired by the styles of renowned authors, but it drew significant criticism because it used those authors’ names without their consent. Julia Angwin, an investigative journalist and the lead plaintiff in a class-action lawsuit against Grammarly, said she never thought her professional identity could be marketed so casually. The episode not only created legal trouble for the company but also drew attention to the broader implications of AI for the value of human creativity. The case could set precedents for how a person’s likeness and voice can be used in AI applications, especially as demand for automated and generative tools grows.

Using AI-generated content in this way also raises serious questions about the quality and credibility of AI tools, particularly when the results are poor (Angwin described the AI’s edit suggestions as "slopperganger"). Many are left wondering how to balance the advantages of AI assistance against the risk of diluting authentic human craft. Should tech companies prioritize transparency and consent more stringently? As the backlash shows, obtaining consent is not only ethically sound; it could also shield companies from serious legal repercussions.

In conclusion, while Grammarly’s Expert Review aimed to leverage the styles of celebrated writers to enhance the user experience, it unintentionally crossed ethical boundaries in ways that could have lasting repercussions for the AI industry. With calls for more ethical uses of AI growing, the incident invites closer scrutiny of how companies deploy AI and of the standards they set for respecting the individuals whose work inspires these technologies. Readers interested in the ethics of AI in creative fields can look further into resources on digital ethics and AI accountability mechanisms.

Read These Next

The Threat of AI Deepfakes in Politics

The article explores the rising concern of overseas content farms using AI to generate misleading political deepfakes, highlighting their mechanisms, implications for democracy, and the need for improved detection methods.

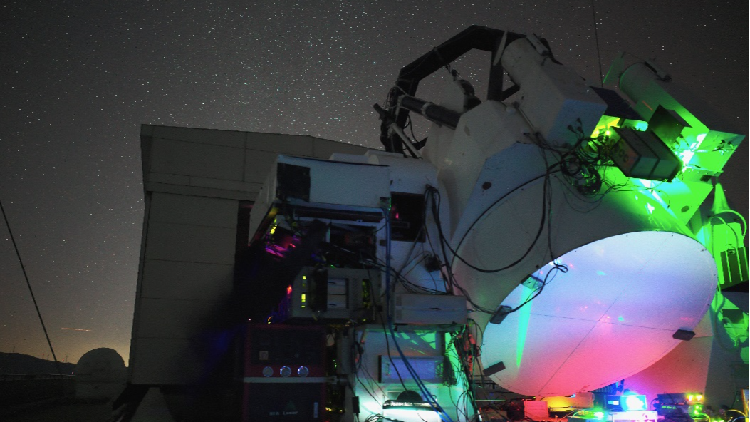

China Breaks Ground in Satellite-Ground Laser Communication

Chinese researchers achieved 1 Gbps laser communication between a satellite and ground, exceeding 40,000 km, for over three hours.

Inside China's Weight Management Struggles in Major Hospitals

China's Healthy China 2030 Initiative prioritizes weight management, with hospitals offering services to tackle obesity and improve health.