AI Chatbots in Medicine: Insights from Oxford Study

In recent years, AI technology has made significant strides, particularly in healthcare. However, a new study from the University of Oxford raises red flags about the reliability of AI chatbots as a source of medical advice. The research shows that although these systems hold promise, they often give inconsistent and sometimes dangerous recommendations to users seeking healthcare guidance. With more than one-third of UK residents reportedly using AI for mental health and general wellbeing support, understanding the limitations of this technology is crucial.

The study analyzed how large language models (LLMs) performed when interacting with real users who were given specific medical scenarios, such as a severe headache or chronic fatigue in a new mother. Participants were divided into two groups: one relied on AI chatbots for advice, while the other used traditional methods. Although the chatbots perform well on standardized medical tests, they struggled to provide helpful guidance in practice. Many users found it difficult to formulate precise questions, and the chatbots responded with a mixture of sound advice and misleading information. This inconsistency left participants confused and uncertain about which recommendations to follow.

Researchers highlighted that the interaction between AI systems and users is complex. Users often do not disclose all relevant information, and when chatbots listed several possible health conditions, participants struggled to identify the correct one. According to Dr. Amber W. Childs, chatbots reflect the biases present in existing medical data, which further complicates their role in healthcare. The findings underscore the need for rigorous testing and continuous improvement of AI systems as they are incorporated into healthcare settings. As AI in medicine continues to evolve, strengthening regulatory frameworks and ensuring patient safety will be vital.

Read These Next

The Threat of AI Deepfakes in Politics

The article explores the rising concern of overseas content farms using AI to generate misleading political deepfakes, highlighting their mechanisms, implications for democracy, and the need for improved detection methods.

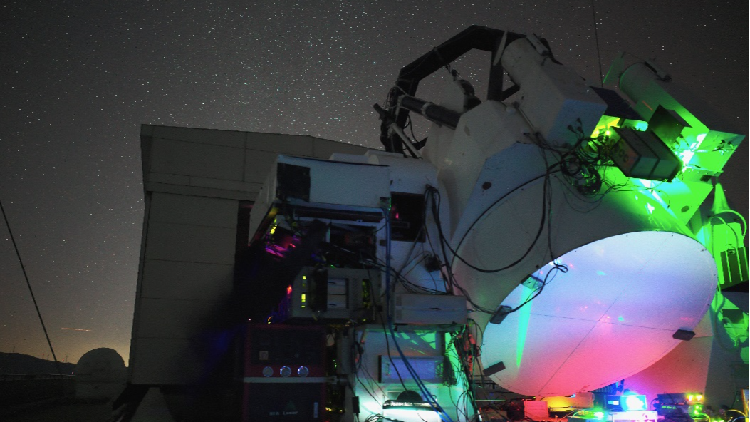

China Breaks Ground in Satellite-Ground Laser Communication

Chinese researchers achieved 1 Gbps laser communication between a satellite and ground, exceeding 40,000 km, for over three hours.

Grammarly's Ethical Misstep with AI Expert Personas

This article explores the ethical implications of Grammarly's recent incident involving its AI feature that imitated famous writers without their consent, highlighting the need for responsibility in tech innovation.