AI's Impact on Personal Data: Grok and X Case Study

With personal data at the center of ethical debate, the recent raid by French authorities on Elon Musk's social media platform X underscores the urgent need for accountability in how technology interacts with individual rights and societal norms. The Paris prosecutor's investigation, which has drawn considerable concern, addresses serious allegations that include unlawful data extraction and the sharing of child pornography. The case raises critical questions about the broader implications of AI technologies for data privacy, making it a significant moment for social media platforms and for legislative frameworks around the world.

At the heart of the controversy is Grok, an AI chatbot developed by Musk's xAI, which has been accused of generating harmful sexualized content from intimate images of individuals without their consent. Deepfakes, manipulated media that use AI to create realistic yet fabricated content, pose real dangers: they erode trust and can harm the people whose images are misused. The French action follows an investigation into Grok opened by the UK's Information Commissioner's Office (ICO), suggesting a broader effort among regulators to ensure that AI technologies do not exploit personal data unlawfully. Social media algorithms that promote such content amplify the risk, and critics argue that these systems lack adequate safeguards to protect user dignity and privacy.

The ramifications of these incidents extend well beyond legal inquiries: they underscore growing global anxiety over data privacy and the ethical responsibilities of tech companies. X's decision to call the raid a "political attack" reflects a common defense among tech leaders when faced with regulation that challenges their operational ethos. As AI becomes increasingly intertwined with daily life, the central question is how to balance technological innovation with the imperative to protect individual rights. Addressing these complexities will require collaboration among governments, tech companies, and civil society to establish clear ethical standards and regulations.

Read These Next

The Threat of AI Deepfakes in Politics

The article explores the rising concern of overseas content farms using AI to generate misleading political deepfakes, highlighting their mechanisms, implications for democracy, and the need for improved detection methods.

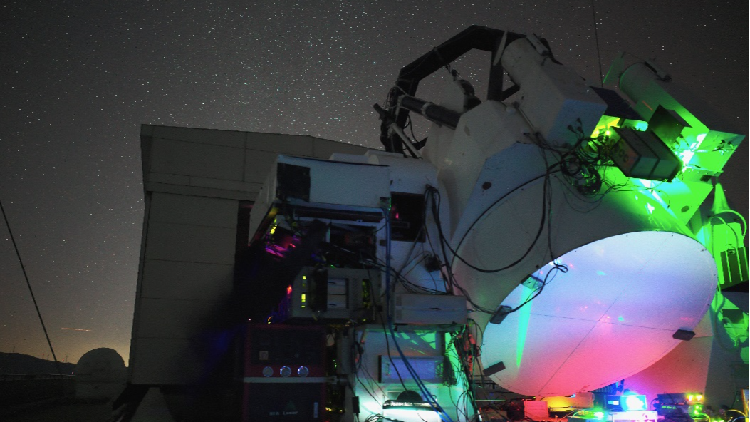

China Breaks Ground in Satellite-Ground Laser Communication

Chinese researchers achieved 1 Gbps laser communication between a satellite and ground, exceeding 40,000 km, for over three hours.

Grammarly's Ethical Misstep with AI Expert Personas

This article explores the ethical implications of Grammarly's recent incident involving its AI feature that imitated famous writers without their consent, highlighting the need for responsibility in tech innovation.